Many quality standards require calibration in order to reduce measurement risk. But how does calibration actually reduce that risk?

How do you know if your calibration provider has been successful at reducing measurement risk? This article explores what measurement risk is and what its key drivers are. It explains exactly what calibration is, how it mitigates risk, and how calibration deliverables differ. A proper calibration strategy is critical to reducing your testing costs.

Measurement decisions and risk

We make measurements to help us make decisions. Measurement decisions come in many forms depending on your job function.

A validation team decides whether to rework or complete a design. A manufacturing team decides whether to ship or fail each individual product. A scientist confirms or invalidates a theory.

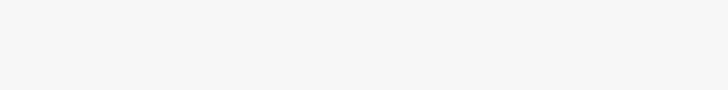

Measurement risk is the probability of making an incorrect decision. The possible outcomes in your measurement process are: a correct pass, a correct fail, a false pass, and a false fail. In your role, you might have slightly different terminology, but the decisions are the same.

A correct pass is everyone’s favourite decision; you can move forward in your design or manufacturing process. A correct fail is your measurement system correctly identifying a problem. A false pass lets a flawed product or design through, which can lead to additional warranty costs, product recalls, or even loss of life. A false fail halts the process without real need. False fails can delay time to market or create additional scrap and rework costs.
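The four outcomes can be written down as a small classification, shown here as a minimal sketch. The function name, its parameters, and the idea of comparing a known "true" value against the measured value are illustrative assumptions; in practice the true value is never known exactly, which is why risk has to be estimated statistically.

```python
def classify_decision(true_value, measured_value, lower_limit, upper_limit):
    """Label a pass/fail decision as one of the four possible outcomes.

    Hypothetical helper: assumes the true value is known, which is only
    the case in simulations or after re-testing on a better standard.
    """
    truly_in_spec = lower_limit <= true_value <= upper_limit
    measured_in_spec = lower_limit <= measured_value <= upper_limit

    if measured_in_spec:
        # The measurement system says "pass".
        return "correct pass" if truly_in_spec else "false pass"
    # The measurement system says "fail".
    return "false fail" if truly_in_spec else "correct fail"


if __name__ == "__main__":
    # A unit that is truly just out of specification but measures in.
    print(classify_decision(10.1, 9.95, 9.0, 10.0))
```

Measurement risk is then the fraction of decisions that land in the two "false" categories.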

Measurement risk is directly related to the consistency, accuracy and repeatability of your measurements. In metrology, we use a quantity called measurement uncertainty to calculate risk. Measurement uncertainty combines all the possible sources of error into a single standard deviation. We can then use statistics to calculate risk.
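As a rough illustration of how individual error sources become a single standard deviation and then a risk figure, the sketch below combines components by root-sum-square and evaluates a normal distribution. It assumes the components are independent and already expressed as standard deviations, and that the combined error is normally distributed; the function names and example numbers are hypothetical.

```python
import math
from statistics import NormalDist


def combined_standard_uncertainty(components):
    """Root-sum-square of independent standard uncertainties (assumption)."""
    return math.sqrt(sum(u * u for u in components))


def probability_out_of_tolerance(measured, std_uncertainty, lower, upper):
    """Probability that the true value lies outside [lower, upper],
    assuming it is normally distributed around the measured value."""
    dist = NormalDist(mu=measured, sigma=std_uncertainty)
    return dist.cdf(lower) + (1.0 - dist.cdf(upper))


if __name__ == "__main__":
    # Hypothetical components: intrinsic, environmental, installation, operational.
    u = combined_standard_uncertainty([0.03, 0.02, 0.01, 0.01])
    risk = probability_out_of_tolerance(measured=0.95, std_uncertainty=u,
                                        lower=-1.0, upper=1.0)
    print(f"combined u = {u:.4f}, risk = {risk:.2%}")
```

A reading that sits exactly on a limit comes out at roughly 50% risk under these assumptions, which is why readings near the limits deserve the most attention.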

Contributors to measurement uncertainty

Measurement error comes from four general sources: intrinsic, environmental, installation, and operational. Intrinsic errors are caused by limitations or inaccuracies of the measurement instrument itself. Environmental errors come from the instrument’s surroundings.

Installation error covers all errors caused by everything hooked up to your instrument. Finally, operational errors are measurement issues caused by the engineer or technician operating the equipment. For each of these categories we should consider both short-term and long-term sources of measurement uncertainty.

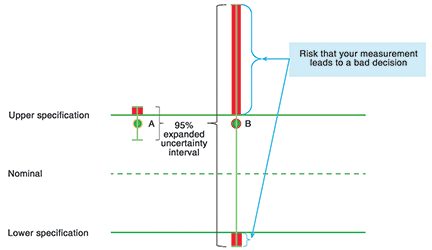

Intrinsic instrument error comes from three primary sources: short-term repeatability, instrument wear, and component ageing. Short-term repeatability, or statistical dispersion, is easily measured by making a series of measurements and calculating the standard deviation. Instrument wear is caused by usage. Component ageing shows up as drift in the analog electrical components of the test equipment. Voltage and power measurements often drift in a Gaussian random walk, which means performance can change rapidly. Crystal oscillators often drift in a linear fashion.
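The difference between these behaviours can be sketched with the standard library alone. The code below computes repeatability as a sample standard deviation and generates two simple drift models; the step size, drift rate, and function names are made-up values for illustration, not data from any real instrument.

```python
import random
from statistics import stdev


def repeatability(readings):
    """Short-term repeatability as the sample standard deviation."""
    return stdev(readings)


def random_walk_drift(steps, step_sigma, seed=0):
    """Gaussian random walk: each step adds an independent normal offset."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    offset = 0.0
    path = []
    for _ in range(steps):
        offset += rng.gauss(0.0, step_sigma)
        path.append(offset)
    return path


def linear_drift(steps, rate):
    """Linear drift: the offset grows by a constant amount per step."""
    return [rate * (i + 1) for i in range(steps)]


if __name__ == "__main__":
    print(repeatability([10.01, 9.99, 10.02, 9.98, 10.00]))
    print(random_walk_drift(steps=12, step_sigma=0.05)[-1])
    print(linear_drift(steps=12, rate=0.01)[-1])
```

A linear drift is predictable from its history, whereas a random walk can move in either direction at each step, so only its spread can be forecast.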

Environmental error includes temperature, altitude, and humidity. Temperature is usually the biggest source of error. To reduce the impact of fluctuations in temperature, the general rule of thumb is to keep electronic instruments within ±5°C, unless noted otherwise. For other test equipment, such as gage blocks, temperature is the primary source of measurement uncertainty.

Installation error includes all connected accessories including cables, connectors, probes, and switch boxes. Power spikes or fluctuations in your electrical circuit erode your margins.

Human error occurs during the testing process. Standardising test software, training, and your measurement quality system mitigate any reproducibility problems you might see from human error.

Uncertainty growth and calibration

Over time, the intrinsic performance of instruments can change. While you do not know if they change for the better or worse, the uncertainty surrounding the performance increases. When the uncertainty surrounding your measurements increases, your ability to make consistent, accurate, and repeatable measurements decreases. Proper calibration accurately measures your test equipment’s performance. Once the instrument’s performance has been measured, its intrinsic uncertainty is reset to the uncertainty of the calibration process itself.

The basic definition of calibration is measuring the performance of a test asset per the international metrology standards. It does not always include pass/fail decisions or adjustments. Different calibrations have different deliverables.

Calibration deliverables

Extent of testing

Different calibration providers offer different extents of coverage, as indicated by the number of tests run and points tested. The more thoroughly an instrument is tested, the greater the confidence in the calibration result. The original equipment manufacturer will suggest the parameters to test and the test points. No calibration standards specify the extent of testing required. To reduce costs, it is not uncommon for a calibration supplier to reduce the number of tests and points tested.

Calibration providers skip tests for one of three reasons:

1. The supplier does not have the equipment to perform the test.

2. The tests are complex, require specialised skills, or take a long time to develop or to run.

3. The contractual price does not give time for all tests to be performed.

Example

One contract manufacturer sent several equivalent-time sampling oscilloscopes to a third-party maintainer (TPM). The TPM did not test the time base of the oscilloscopes.

Unfortunately, the time bases on several units had drifted and needed adjustment.

The contract manufacturer noticed an increase in rejects and contacted the original equipment manufacturer about the design failure. After several weeks of debugging the issue, they discovered that the test equipment needed adjustment.

Exception

For instruments used for a single application, only the specifications the end user requires need to be measured. This strategy does not work for shared equipment. Since your calibration provider typically doesn’t know which specifications you depend upon, Keysight’s calibration policy is to measure every warranted specification, for every installed option, every time.

Data provided

Most importantly, the data provided is proof of the extent of testing. It also serves two additional roles. First, if a unit is measured out of tolerance, it is possible that you have been making bad measurements, and the data is required to do an impact assessment. When measurement risk is high, some companies perform an impact analysis even for units measured in tolerance. Second, the data can be used to see trends in the instrument and predict future failures.

Another critical piece of information about your calibration is the measurement uncertainty, which is discussed in a following section on ‘Accuracy of test’.

Example

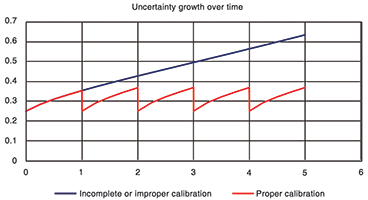

For the Keysight N5230C PNA-L network analyser, you might see a spike on the trace noise test. Once a spike occurs, it will grow.

In about 12 to 18 months, the analyser will be out of specification and will require a repair.

Figure 3 shows an example of two spikes, one out of tolerance and the other about to go out of tolerance. This data allows you to better utilise your equipment by having planned downtime instead of unplanned downtime.
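One simple way to turn historical calibration data into a planned-downtime estimate is to fit a straight line and extrapolate to the specification limit. The sketch below does this with ordinary least squares; the linear growth model, the function name, and the example readings are assumptions for illustration only and are not taken from the N5230C data.

```python
def months_until_limit(months, values, limit):
    """Estimate months remaining until a growing parameter reaches `limit`.

    Fits value = slope * month + intercept by least squares and
    extrapolates. Returns None when the fitted trend is flat or
    decreasing, because no crossing can be predicted in that case.
    """
    n = len(months)
    mean_x = sum(months) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in months)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(months, values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x

    if slope <= 0:
        return None
    crossing_month = (limit - intercept) / slope
    # Time remaining is counted from the most recent calibration.
    return max(0.0, crossing_month - months[-1])


if __name__ == "__main__":
    # Hypothetical readings taken at three annual calibrations.
    print(months_until_limit([0, 12, 24], [1.0, 2.0, 3.0], limit=4.0))
```

With only a few calibration points the estimate is coarse, so it is better suited to scheduling a closer look than to setting a firm repair date.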

Accuracy of test

In the calibration world, the accuracy of the test is reported as expanded measurement uncertainty. You can estimate the measurement uncertainty in three ways.

The easiest way is to have the calibration provider generate it for you. The second way is to estimate the measurement uncertainty yourself from the standards used. A rough estimate is to take the specification of the instrument used as the standard as the standard deviation. This accounts for the assumed rectangular distribution for specifications and for some of the uncertainty between the device under test and the instrument connector.

Finally, you can estimate the measurement uncertainty based on the company’s scope of accreditation. The biggest caveat to this option is that many companies do not use the equipment audited for the scope of accreditation for regular calibration, so you must check the traceability report to confirm that the proper standards are used.
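For the second approach above, the sketch below contrasts two conversions from a specification limit to a standard uncertainty. Dividing by the square root of three is the usual treatment of a rectangular distribution; using the specification directly, as the rough estimate in the text does, is more conservative. The coverage factor k = 2 and the function names are assumptions chosen for illustration.

```python
import math


def standard_uncertainty_rectangular(spec_limit):
    """Standard deviation of a rectangular distribution of half-width spec_limit."""
    return spec_limit / math.sqrt(3)


def standard_uncertainty_rough(spec_limit):
    """Rough, more conservative estimate: use the specification itself."""
    return spec_limit


def expanded_uncertainty(standard_uncertainty, k=2.0):
    """Expanded uncertainty with coverage factor k (k = 2 assumed here)."""
    return k * standard_uncertainty


if __name__ == "__main__":
    spec = 0.1  # hypothetical specification of the standard, in dB
    for estimate in (standard_uncertainty_rectangular, standard_uncertainty_rough):
        u = estimate(spec)
        print(estimate.__name__, round(u, 4), round(expanded_uncertainty(u), 4))
```

The gap between the two results is the margin the rough estimate leaves for contributions, such as connector effects, that the specification alone does not cover.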

Example

We found one third-party calibration lab using a legacy HP 8340B synthesised sweeper to measure a Keysight E4440A PSA spectrum analyser. The measurement uncertainty reported in their scope of accreditation was ±3,6 dB despite the ±0,24 dB specification limit (at 50 MHz). This led to a specific measurement risk of 89%.

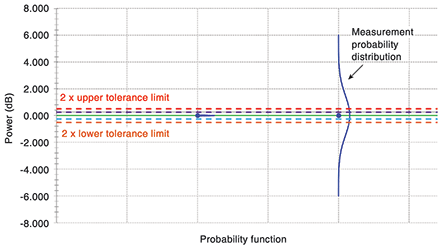
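A quick sanity check on figures like these is to compare the specification limit with the reported uncertainty. The sketch below computes that ratio, often called a test uncertainty ratio; the function name is hypothetical, and the code does not attempt to reproduce the 89% risk figure, which depends on details not given here.

```python
def uncertainty_ratio(spec_limit, expanded_uncertainty):
    """Ratio of the specification limit to the expanded measurement uncertainty.

    Values well above 1 mean the calibration can resolve the specification;
    values below 1 mean the uncertainty is larger than the limit being checked.
    """
    if expanded_uncertainty <= 0:
        raise ValueError("expanded_uncertainty must be positive")
    return spec_limit / expanded_uncertainty


if __name__ == "__main__":
    # Figures from the example: ±0.24 dB specification, ±3.6 dB uncertainty.
    print(f"{uncertainty_ratio(0.24, 3.6):.3f}")
```

Here the uncertainty is about fifteen times larger than the specification limit, so the measurement cannot meaningfully confirm whether the analyser is in tolerance.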

Guardbanding and adjustments

Guardbanding is one technique used to reduce measurement risk. The guardband is the offset between the acceptance limit and the specification. In most cases, the acceptance limit is tighter than the specification to limit the risk of false passes. The trade-off is an increase in false failures.
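A guardbanded decision can be sketched in a few lines. The code below tightens symmetric specification limits by a guardband and applies the resulting acceptance limits; the function names and numbers are hypothetical, and how large the guardband should be (for example, a fraction of the expanded uncertainty) is a policy choice that this sketch leaves open.

```python
def acceptance_limits(lower_spec, upper_spec, guardband):
    """Move each specification limit inward by `guardband`."""
    lower_accept = lower_spec + guardband
    upper_accept = upper_spec - guardband
    if lower_accept > upper_accept:
        raise ValueError("guardband is wider than half the tolerance band")
    return lower_accept, upper_accept


def accept(measured, lower_spec, upper_spec, guardband):
    """Pass only if the reading falls inside the guardbanded limits."""
    lower_accept, upper_accept = acceptance_limits(lower_spec, upper_spec, guardband)
    return lower_accept <= measured <= upper_accept


if __name__ == "__main__":
    # Hypothetical reading close to the upper specification limit.
    print(accept(0.8, -1.0, 1.0, guardband=0.0))   # no guardband
    print(accept(0.8, -1.0, 1.0, guardband=0.25))  # with guardband
```

The same reading passes without a guardband and fails with one, which is the trade-off described above: fewer false passes at the cost of more false failures.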

Adjustments alter the instrument’s performance so that it is as close to nominal as possible. Adjustments for legacy analog instruments simply required turning a potentiometer with a screwdriver. Today, adjustments require very accurate instruments, automated procedures, and access to internal field-programmable gate arrays (FPGAs). Not every calibration supplier can provide these sophisticated adjustments. If the supplier cannot provide an adjustment, any specification considered out of tolerance is treated as a repair.

Conclusion

If you want to reduce measurement errors, it is important to calibrate your instruments regularly. Proper calibration lowers the cost of test by improving instrument performance and so reducing the number of incorrect decisions made. When evaluating your calibration supplier, consider their expertise, the extent of testing, the data they provide, their measurement accuracy, and their ability to perform adjustments.


© Technews Publishing (Pty) Ltd | All Rights Reserved